Build-time vs. Run-time Gen-AI in Telco: Where Intelligence Belongs Over the Next 18 Months

We are coming out of two years in which Generative AI has been reshaping society from the ground up. It has rewritten how we write, how we code, how we research, how we make decisions, and how we imagine the next decade of every industry. That kind of transformation generates a natural anxiety: the urge to incorporate AI into as many processes as possible, as fast as possible, before someone else does.

Telecommunications networks are not immune to that pressure. But they are a special case. They are extremely complex systems: distributed, multi-vendor, multi-domain, multi-generation, with strict SLAs, regulatory obligations, and customers who notice instantly when something fails. The general-purpose intuitions that work in other industries do not always translate well here. And the question that matters in telco is not whether to adopt AI, but where exactly to place it inside an operating system that was never designed to be probabilistic.

That question is not academic. The answer determines how much value AI actually delivers to a critical network, how much risk it introduces, and whether the operator ends up with an operating system they can govern or with a black box they cannot audit.

Two AIs, often confused under one name

Before talking about where to place AI in the network, it is worth separating two things the industry tends to discuss as if they were the same.

The first is what we sometimes call traditional AI: machine learning applied to specific, well-bounded problems such as anomaly detection, forecasting, capacity prediction, pattern recognition, and classification. This is neither new nor speculative. There are concrete processes in operations today where it adds clear value, typically running on highly centralized systems with curated data. The challenge with this kind of AI is not whether it works, but how to take it to scale across domains, vendors, and use cases without each implementation becoming an isolated island.

The second is Generative AI. Its arrival was so disruptive that, for a moment, it looked as if every architecture had to be agentic, every workflow had to be wrapped around an LLM, and every operational decision had to flow through an autonomous agent. Two years in, the picture is more nuanced. Generative AI has shown enormous value in some use cases, and pure complexity with little real return in others. The interesting question is no longer whether to use it, but how to tell the two situations apart.

That separation is what leads, almost naturally, to the distinction between Build-time and Run-time AI in network operations.

Two very different roles for the same technology

Run-time AI is what most discussions describe by default. An agent observes the live state of the network, interprets context, makes a decision, and acts. The model lives in the critical path of execution. When a degradation appears at 03:00, it is the AI that decides what to do, often within seconds.

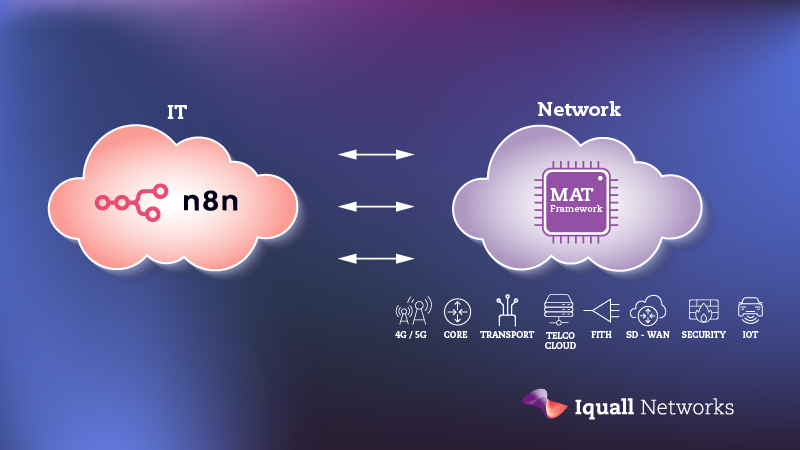

Build-time AI is a different proposition. The AI does not operate the network at the critical moment. Instead, it generates, refines, and expands the operational logic that governed automation will later execute. Playbooks, verifications, policies, remediations, integrations, compliance controls, closed loops: all of these can be produced or improved with AI. But at the moment of execution, what acts on the network is a deterministic, traceable, validated automation.

The shorthand is simple: AI develops automation; automation operates the network. This is not merely a semantic distinction. It changes what the system actually is.
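To make that split concrete, here is a minimal Python sketch of the boundary, under loudly stated assumptions: the action names, the `Playbook` and `Registry` types, and the validation rules are all hypothetical, and the AI-generated draft is simply hard-coded where an LLM call would sit. At build time, a draft becomes executable only if it passes deterministic checks and carries a named human approver. At run time, the engine executes an approved, content-hashed version and rolls back on failure, with no model in the loop.

```python
from dataclasses import dataclass, field
import hashlib
import json

# Hypothetical whitelist of actions the automation engine knows how to run.
ALLOWED_ACTIONS = {"check_interface", "drain_traffic", "apply_config", "restore_config"}


@dataclass(frozen=True)
class Playbook:
    name: str
    steps: tuple     # ordered (action, target) pairs
    rollback: tuple  # (action, target) pairs run if any step fails

    def digest(self) -> str:
        # Content hash: every run is traceable to one exact approved version.
        payload = json.dumps([self.name, self.steps, self.rollback]).encode()
        return hashlib.sha256(payload).hexdigest()[:12]


def validate(pb: Playbook) -> list:
    """Deterministic checks an AI-generated draft must pass before promotion."""
    errors = []
    if not pb.steps:
        errors.append("empty playbook")
    for action, _target in pb.steps + pb.rollback:
        if action not in ALLOWED_ACTIONS:
            errors.append(f"unknown action: {action}")
    changes_config = any(action == "apply_config" for action, _ in pb.steps)
    if changes_config and not pb.rollback:
        errors.append("config change without rollback")
    return errors


@dataclass
class Registry:
    approved: dict = field(default_factory=dict)  # digest -> Playbook
    audit_log: list = field(default_factory=list)

    def promote(self, pb: Playbook, approved_by: str) -> str:
        """Build time: only validated, human-approved drafts become executable."""
        errors = validate(pb)
        if errors:
            raise ValueError("; ".join(errors))
        if not approved_by:
            raise PermissionError("human approval required")
        digest = pb.digest()
        self.approved[digest] = pb
        self.audit_log.append(("promoted", digest, approved_by))
        return digest

    def execute(self, digest: str, run_action) -> bool:
        """Run time: only approved logic runs; no model is called here."""
        pb = self.approved[digest]  # KeyError if this version was never approved
        for action, target in pb.steps:
            ok = run_action(action, target)
            self.audit_log.append(("step", digest, action, target, ok))
            if not ok:
                for r_action, r_target in pb.rollback:
                    run_action(r_action, r_target)
                    self.audit_log.append(("rollback", digest, r_action, r_target))
                return False
        return True


# Build time: a stand-in for a draft an LLM might return from an expert's intent.
draft = Playbook(
    name="upgrade-pe1",
    steps=(
        ("drain_traffic", "pe1"),
        ("apply_config", "pe1"),
        ("check_interface", "pe1:xe-0/0/1"),
    ),
    rollback=(("restore_config", "pe1"),),
)
registry = Registry()
version = registry.promote(draft, approved_by="senior.engineer")

# Run time: a stand-in driver in which the post-change interface check fails.
result = registry.execute(version, lambda action, target: action != "check_interface")
print(result)                     # False
print(registry.audit_log[-1][0])  # rollback
```

The design choice worth noting is that `execute` accepts only the digest of an approved version, so every action in the audit log traces back to an exact playbook and to the person who approved it.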

Why this matters more in telco than in most industries

Run-time AI works well when ambiguity is acceptable, when the cost of an unusual decision is low, and when the system can afford to be probabilistic. Recommendation engines, content generation, customer-service copilots: all are reasonable places for AI to make live decisions, because the worst case is bounded.

Critical telecommunications networks are not those environments. They demand predictability, traceability, deterministic behavior, and the ability to roll back. A modern operator manages thousands of elements across multiple domains, vendors, and technologies, while sustaining strict SLAs and regulatory obligations. In that context, an AI that improvises decisions on the production network is not a feature. It is a governance problem waiting to surface during the worst possible incident.

This does not mean Run-time AI has no place. It does. But its natural territory is narrower than the industry usually admits: ambiguous troubleshooting, contextual enrichment, correlation of dispersed signals, NOC assistance, and exploration of scenarios not covered by predefined logic. Useful, valuable work, but assistance, not control.

The structural problem of the network, turning expert knowledge into operational capability at the pace the business demands, is not a Run-time problem. It is a Build-time one.

The bottleneck Build-time AI actually solves

For most operators, the constraint is not deciding what to automate. It is the speed at which expert knowledge becomes usable automation. That translation has historically depended on long cycles of analysis, development, validation, and integration, all bottlenecked by the availability of senior engineers.

Build-time AI changes that equation. A domain expert can describe an intent, an exception, a new verification, or a recurring failure pattern, and AI can generate the corresponding playbook, policy, or test in a fraction of the time. The expert remains responsible for judgment and validation, but the mechanical work of producing the logic accelerates dramatically.

The consequence compounds over time. Every maintenance window that ends in a rollback contains operational evidence: which change failed, which symptoms preceded it, which metrics deviated. With a Build-time approach, that evidence feeds new verifications, which are then incorporated into the automation system before the next similar window. The test bank grows continuously. The next window arrives with a stronger verification system than the previous one, not because someone wrote a hundred new checks by hand, but because the system learns between windows.
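The rollback-to-verification loop can be illustrated with a similarly hypothetical sketch. It assumes a rollback is recorded as before/after metric snapshots for a given change type, and it applies one deliberately simple rule: any metric that fell below 90% of its baseline becomes a check for future changes of the same type. The metric names are illustrative, and a real system would need per-metric direction (a CPU spike is also a symptom, yet this rule ignores it) and much richer evidence.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Check:
    change_type: str  # kind of change this check guards (hypothetical label)
    metric: str       # metric that must not degrade (illustrative name)
    min_ratio: float  # post-change value must stay >= min_ratio * baseline


@dataclass
class RollbackRecord:
    change_type: str
    baseline: dict    # metric -> value before the change
    observed: dict    # metric -> value when the rollback was triggered


def checks_from_rollback(rec: RollbackRecord, tolerance: float = 0.9) -> set:
    """Turn every metric that dropped below `tolerance` of baseline into a check."""
    derived = set()
    for metric, before in rec.baseline.items():
        after = rec.observed.get(metric, before)
        if before > 0 and after / before < tolerance:
            derived.add(Check(rec.change_type, metric, tolerance))
    return derived


@dataclass
class VerificationBank:
    checks: set = field(default_factory=set)

    def learn(self, rec: RollbackRecord) -> int:
        """Add checks derived from a rollback; return how many were new."""
        new = checks_from_rollback(rec) - self.checks
        self.checks |= new
        return len(new)

    def verify(self, change_type: str, baseline: dict, current: dict) -> list:
        """Return failing checks for this change type; an empty list means pass."""
        failures = []
        for check in sorted(self.checks, key=lambda c: c.metric):
            if check.change_type != change_type or check.metric not in baseline:
                continue
            if current.get(check.metric, 0) < check.min_ratio * baseline[check.metric]:
                failures.append(check)
        return failures


# Window 1 ends in a rollback: BGP sessions dropped and CPU spiked.
bank = VerificationBank()
rec = RollbackRecord(
    change_type="pe_software_upgrade",
    baseline={"bgp_sessions_up": 40, "throughput_gbps": 100.0, "cpu_pct": 35.0},
    observed={"bgp_sessions_up": 31, "throughput_gbps": 97.0, "cpu_pct": 80.0},
)
learned = bank.learn(rec)
print(learned)  # 1 (only the BGP drop matches this simple rule)

# Window 2: the same kind of change is verified against the learned check.
failures = bank.verify("pe_software_upgrade", {"bgp_sessions_up": 40}, {"bgp_sessions_up": 35})
print([c.metric for c in failures])  # ['bgp_sessions_up']
```

Learning the same record twice adds nothing, so in this sketch the bank grows only with genuinely new evidence.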

That is not a marginal improvement or a productivity win; it is a structural advantage. The network provides experience; AI converts experience into capability; automation executes with discipline.

A hybrid model, not a binary one

The right framing is not “Build-time good, Run-time bad.” It is that each one belongs in a different place. Build-time AI carries the weight of generating and evolving operational logic, the part of the work where speed and scale matter most. Run-time AI assists where context is genuinely ambiguous and where human operators benefit from real-time analysis. Governed automation remains the only mechanism that touches the production network at critical moments.

When this separation is respected, three things happen. First, the operator captures the generative power of AI without turning execution into a probabilistic black box. Second, the boundary between what AI does and what automation does becomes auditable. Third, governance stops being an obstacle to AI adoption and becomes the platform that enables it.

The strategic implication

The anxiety of the last two years is understandable, and to a large extent it is justified. AI is a generational shift, and treating it as a passing trend would be a strategic mistake. But in telco, the question is not how aggressively to inject AI into the network. It is how to inject it where its strengths line up with what the network actually needs.

The operators that will benefit most from AI in the coming years will not be the ones with the most agents in production. They will be the ones who used AI to compress the distance between operational experience and reusable capability, and who let governed automation, not probabilistic inference, run the network. That is a less spectacular vision than the one currently dominating industry conferences. It is also the one that scales without breaking.

The real question is not how much AI you can put in front of the network. It is how much AI you can put behind it, building the logic that runs when nobody is watching.

This blog entry serves as a preview of the second edition of AI-Driven Autonomous Networks: A Strategic and Technical Guide.

By Matías Lambert, CEO & Co-Founder at Iquall Networks

https://www.linkedin.com/in/matiaslambert/